by Christian Sawyer, M.Ed., BCBA, LBS, IBA

Perceived inauthenticity occurs when someone appears to belong to a group, adopting its visible or behavioral traits such as clothing, language, or voiced experiences, but is judged as failing to align with its in-group values, creating tension. We can see this in all kinds of subcultures, even in online mental health spaces. Picture a music fan at a concert wearing a band shirt but unfamiliar with the band’s discography, or a member of an online ADHD support group who claims the diagnosis but shares inconsistent anecdotes. What does their presence in that space entail? What does it mean to be in the group? These individuals occupy a liminal space, neither fully out-group nor accepted as in-group by every member, which in some cases can challenge the group’s identity. Social Identity Theory (Tajfel & Turner 1979) helps explain this: individuals derive self-esteem from valued group memberships and favor their group over others to maintain positive distinctiveness, a sense of being unique and superior through that acceptance. When someone seems inauthentic, that distinctiveness is threatened, prompting members to highlight the behaviors or signals that appear out of line in order to preserve group cohesion.

This dynamic is amplified by entitativity (that’s a nice big word), the perception of a group as a unified entity (Bougie et al. 2022). In subcultures like vegan communities or music fan groups, members share goals such as ethical living or supporting DIY music. In mental health communities, like forums for depression, members bond over shared struggles and coping strategies. For some groups, an individual perceived as inauthentic, either “trying too hard” or “not being ___ enough”, disrupts this cohesion and threatens the group’s unified identity. Bougie et al. (2022) note that high-entitativity groups become protective, often rejecting perceived outsiders. Those seen as inauthentic pose a unique challenge because they are partially aligned: their presence blurs the group’s boundaries, prompting members to emphasize what unity and being a “true member” mean for that subculture.

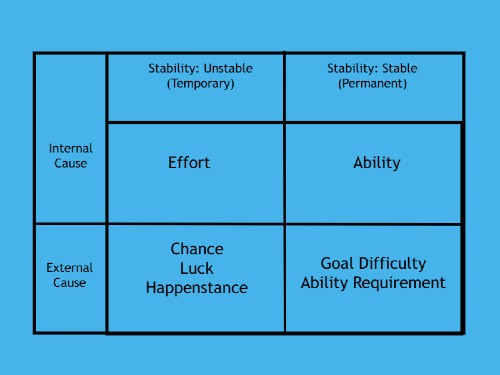

Several theories help explain the psychological mechanisms that drive these reactions. Social Comparison Theory (Festinger 1954) suggests that groups define their identity by comparing themselves to others. Subcultures like music fans or digital nomads distinguish themselves from mainstream society by rejecting consumerism or commercial music, while mental health communities, like those for anxiety, differentiate themselves from people without the diagnosis by emphasizing shared experiences. An individual seen as posing or imitating (that is, viewed as inauthentic) undermines this distinctiveness, prompting members to call out their inauthenticity to reaffirm a highly entitative group identity. Cognitive dissonance (Festinger 1957) also contributes: a mismatch between someone’s presentation and their actions creates discomfort. Labeling such individuals as inauthentic, or as posers, resolves this discomfort by performatively excluding them, reinforcing group cohesion.

These social drivers manifest in behaviors that enforce norms, clarify boundaries, and navigate tensions around authenticity, with distinct expressions in subcultures and mental health communities. Norm enforcement and conformity pressures (even in groups that explicitly claim to reject conformity) ensure adherence to expectations, such as veganism’s dietary rules, punk’s DIY ethos, or mental health communities’ focus on symptom expression. Research on social exclusion (Cristini et al. 2018) shows that fear of rejection encourages conformity within the group. Highlighting perceived inauthenticity enforces these norms, for example by prompting others to discuss obscure topics or challenging them to share experiences that reflect deep in-group knowledge and expertise. Socialization reinforces this, as subcultures teach members what is expected of them: specific ethical norms, subculture-specific histories, or inclusion in the group’s collective trauma narratives.

Those seen as inauthentic face direct comments or disengagement, which can drive them to double down and learn the group’s behaviors and attitudes rather than pursue the precepts and personal motivations that brought them to the group in the first place (Cristini et al. 2018). When these pressures emerge, people seeking inclusion in a space of shared interests or perceived shared experiences are, for all intents and purposes, pressured to align even more closely with the group’s behaviors and signals or risk exclusion and callouts. It’s no longer about the topic; it is now a game of buying into a specific set of group perceptions and rules about what “it” is.

Social exclusion is used to clarify group boundaries, distinguishing in-group from out-group members, as Social Identity Theory (Tajfel & Turner 1979) emphasizes. A music fan who only knows mainstream hits or a vegan who occasionally consumes dairy might be shunned, just as a member of an autism support group who claims the diagnosis without its hallmark symptoms might be questioned publicly. Because these individuals are partially aligned, they are more disruptive than outsiders, provoking more visceral reactions from the in-group enforcers who feel these boundaries most keenly. For some within the group, these “line blurrers” stand out even more than out-group members do (“posers” vs. “normies”). Exclusion can escalate, fostering gatekeeping in subcultures and alienation in online forums. This risks isolating support-seekers, or redefining the group’s core concepts and pulling them further from the original ideas toward a more rigid subculture (often the very thing the individuals came to avoid).

Online interactions amplify these responses, with social media enabling public callouts. Communication Accommodation Theory (Joyce & Harwood 2023) suggests that non-accommodative communication targets outsiders, for instance through memes or comments that expose an individual’s lapses in group-conforming behavior as a form of shaming. In mental health communities, anxiety forums might challenge members who claim severe symptoms but post carefree content, using snarky hashtags like “#NotReallyStruggling”. Joyce and Harwood (2023) note that callouts strengthen bonds but foster hostility, tightening norms through group polarization. Symbolic actions, or identity performances, are used to prove authenticity (Bougie et al. 2022): music fans wear rare merch, vegans showcase sustainable practices, and depression group members share therapy updates or disclose personal difficulties. Fear of being seen as inauthentic drives these actions, which can even lead to escalating cycles of performative behavior (Cristini et al. 2018).

Contextual differences shape these dynamics. In subcultures, authenticity involves cultural knowledge or lifestyle commitment, inauthenticity is seen as cultural betrayal, and exclusion protects the group’s identity. In mental health communities, authenticity centers on lived experience; perceived inauthenticity undermines shared struggles, but exclusion risks personal and interpersonal harm to the targeted individuals. The line between personal authenticity and the group’s definition of “authenticity” becomes blurred. Being oneself, with interests or traits that only partially match the in-group’s, may not pass muster. Personal authenticity can come to be seen as inauthentic within the group.

Within interest-based subcultures, callouts and exclusion can be shaming and discouraging to individuals.

Within mental health communities, the damage can be even greater.

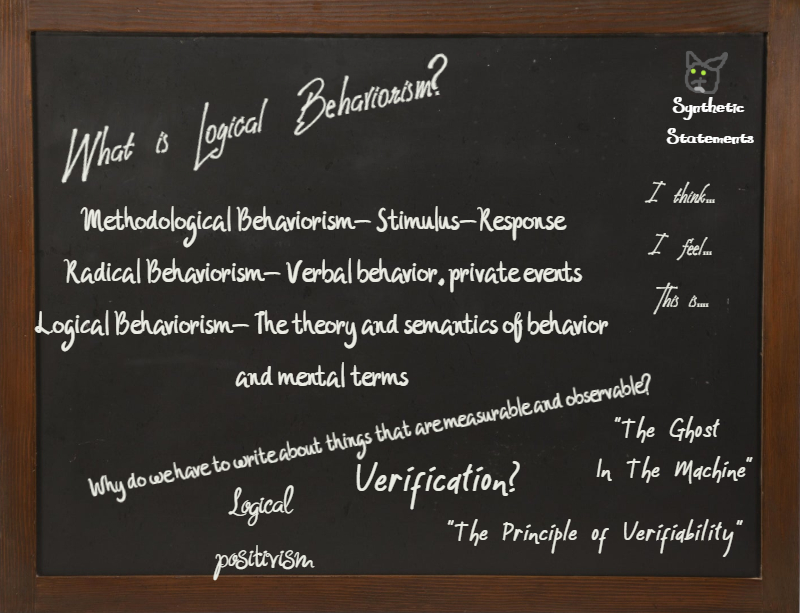

Mental Health Diagnostic Communities (a phrase we’ll use to describe gatherings of individuals with, or claiming, a shared diagnostic label) carry risks that interest-based subcultures do not: social pressure can lead to inaccurate claims to disorders or symptoms, or even to mimicking them, whereas in subcultures the risks are purely cultural or interest-based. Identity overreliance and stigma reinforcement occur when strong identification entrenches illness identities and pathology (Tajfel & Turner 1979). Excluding “inauthentic” members (those who don’t entirely align with group expectations) reinforces these rigid norms, pressuring members to amplify symptoms, worsening distress, stigmatizing atypical experiences, and alienating support-seekers (Bougie et al. 2022). The inauthentic act becomes the very “authentic” signal the group’s enforcers set out to shape. Social pressure to conform drives members to exaggerate symptoms or mimic stereotypical behaviors to gain acceptance, hindering authentic expression, discouraging professional help, and fostering dependence on validation (Cristini et al. 2018). Behavioral interventions, like reinforcing adaptive behaviors, could counter this, but community pressure often overrides clinical approaches. Inaccurate claims to disorders, driven by social media or a desire to belong, trivialize struggles, erode trust, and spread misinformation, with the resulting dissonance prompting exclusion (Festinger 1957). Therapeutic goals can also be undermined for those in treatment when the focus on group acceptance conflicts with clinical approaches, delaying recovery. Recovery and improvement can run counter to the group’s values, and the risk of exclusion reinforces boundaries regardless of the actual clinical and psychological consequences. Real opportunities for wellness get overlooked under the targeted social pressures of group inclusion.

Where do we go from here? How can someone take part without losing their individual interests and their actual purpose for being there? True authenticity is often key, as is taking a moment to assess one’s personal values and how they align with the group. Is it still a place worth being? Is the subculture beneficial to the individual? Emphasizing individual authenticity within the subculture or group may at first glance appear to blur the lines, but rigid enforcement carries even greater risks. Subcultures may gatekeep newcomers to preserve their identity, while mental health communities risk alienating help-seekers and exacerbating stigma, distress, or misinformation through social pressure and inaccurate claims. While these groups can offer amazing benefits, we also have to be aware of how in-group/out-group thinking pervades even the places intended to be refuges.

Comments? Questions? Feel free to comment.

References

Bougie, E., et al. (2022). Entitativity and collective esteem. British Journal of Social Psychology.

Cristini, F., et al. (2018). Global brain dynamics during social exclusion. Frontiers in Neuroscience.

Festinger, L. (1954). A theory of social comparison processes. Human Relations.

Festinger, L. (1957). A theory of cognitive dissonance. Stanford University Press.

Joyce, N., & Harwood, J. (2023). Accommodation and intergroup attitudes on social media. Journal of Computer-Mediated Communication.

Tajfel, H., & Turner, J. C. (1979). An integrative theory of intergroup conflict. In W. G. Austin & S. Worchel (Eds.), The Social Psychology of Intergroup Relations. Brooks/Cole.

Image Credits:

pexels stock images

Icon For Hire – Get Well (Music Video)